-

Programming Update: Jan 2023 and Feb 2023

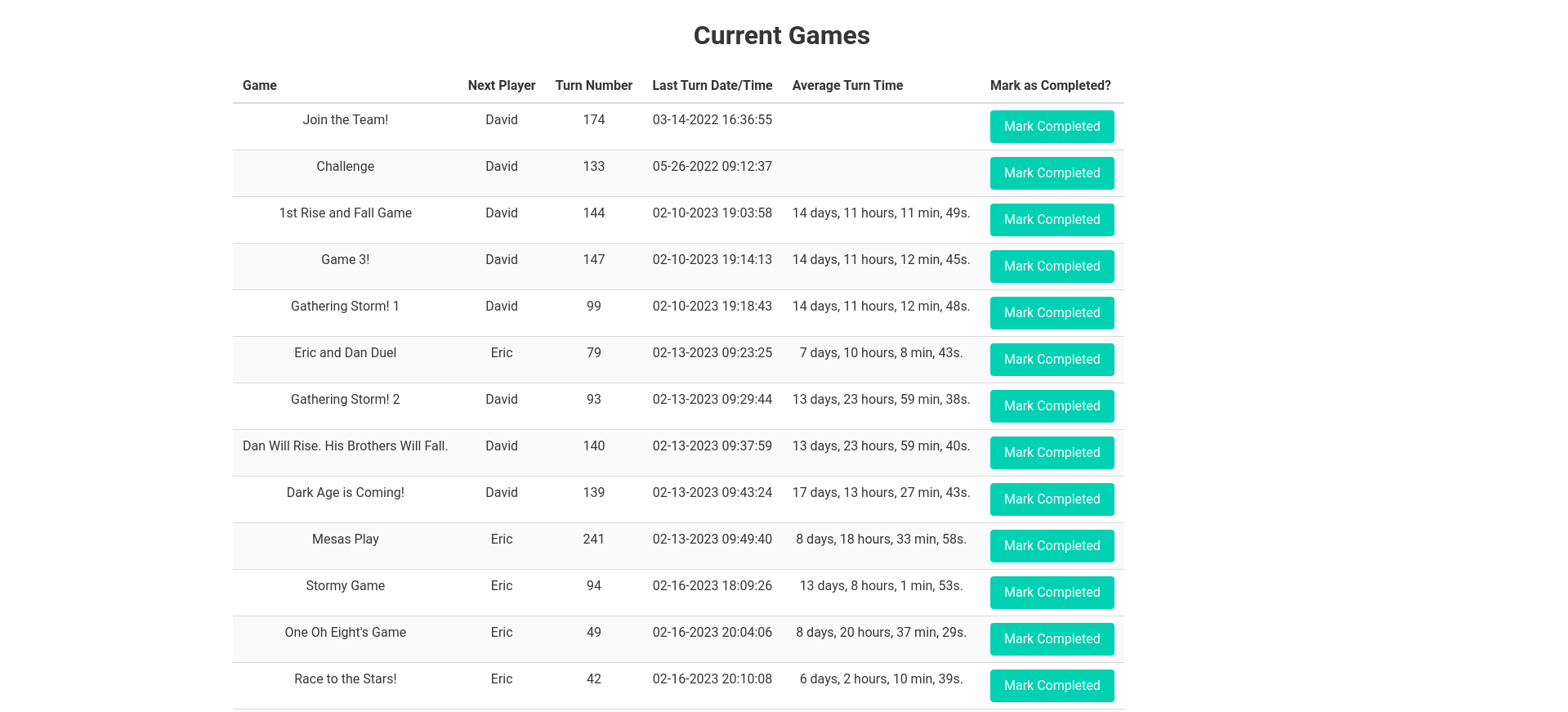

January: January was a relatively light programming month for me. I was focused on finishing up end-of-year blog posts and other tasks. Since Lastfmeoystats is used to generate the stats I need for my end-of-year music post, I worked on it a little to make some fixes. The biggest fix was…

-

Programming Update May-July 2022

I started working my way back towards spending more time programming as the summer started (in between getting re-addicted to CD Projekt Red’s Gwent). I started off by working on my btrfs snapshot program, Snap in Time. I finally added the ability for the remote culling to take place. (My backup directories had started getting a…

-

My Programming Projects and Progress in 2020

Back in 2019, when I did my programming retrospective, I made a few predictions. How did those go? Work on my Extra Life Donation Tracker? Yup! See below! Write more C++ thanks to Arduino? Not so much. C# thanks to Unity? Yes, but not in the way I thought. I only did minor work on…

-

Reviving and Revamping my btrfs backup program Snap-In-Time

If you’ve been following my blog for a long time, you know that back in 2014 I was working on a Python program to create hourly btrfs snapshots and cull them according to a certain algorithm. (See all the related posts here: 1, 2, 3, 4, 5, 6, 7, 8, 9) The furthest I ever…

-

Stratis or BTRFS?

It’s been a while since btrfs was first introduced to me via a Fedora version that had it as the default filesystem. At the time, it was especially brittle when it came to power outages. I ended up losing a system to one such use case. But a few years ago, I started using btrfs…

-

btrfs scrub complete

This was the status at the end of the scrub: [root@supermario ~]# /usr/sbin/btrfs scrub start -Bd /media/Photos/ scrub device /dev/sdd1 (id 1) done scrub started at Tue Mar 21 17:18:13 2017 and finished after 05:49:29 total bytes scrubbed: 2.31TiB with 0 errors scrub device /dev/sda1 (id 2) done scrub started at Tue Mar 21 17:18:13…

-

Speed of btrfs scrub

Here’s the output of the status command: [root@supermario ~]# btrfs scrub status /media/Photos/ scrub status for 27cc1330-c4e3-404f-98f6-f23becec76b5 scrub started at Tue Mar 21 17:18:13 2017, running for 01:05:38 total bytes scrubbed: 1.00TiB with 0 errors So on Fedora 25 with an AMD-8323 (8 core, no hyperthreading) and 24GB of RAM with this hard drive and…

-

Finally have btrfs setup in RAID1

A little under 3 years ago, I started exploring btrfs for its ability to help me limit data loss. Since then I’ve implemented a snapshot script to take advantage of the Copy-on-Write features of btrfs. But I hadn’t yet had the funds and the PC case space to do RAID1. I finally was able to…

-

Exploring Rockstor

I’ve been looking at NAS implementations for a long time. I looked at FreeNAS for a while, then OpenMediaVault. But what I really wanted was to be able to take advantage of btrfs and its great RAID abilities – especially its ability to dynamically expand. So I was happy when I discovered Rockstor on Reddit.…

-

Post Script to yesterday’s btrfs post

Looks like I was right about the non-commit and possibly also about the df -h. Last night at the time I wrote the post: # btrfs fi show /home Label: ‘Home1’ uuid: 89cfd56a-06c7-4805-9526-7be4d24a2872 Total devices 1 FS bytes used 1.91TiB devid 1 size 2.73TiB used 1.99TiB path /dev/sdb1 $ df -h Filesystem Size Used Avail…

-

A Quick Update on my use of btrfs and snapshots

Because of grad school, my work on Snap in Time has been quite halting – my last commit was 8 months ago. So I haven’t finished the quarterly and yearly culling part of my script. Since I’ve been making semi-hourly snapshots since March 2014, I had accumulated something like 1052 snapshots. While performance did improve…

-

btrfs needs autodefrag set

When I first installed my new hard drive with btrfs I was happy with how fast things were running because the hard drive was a SATA3 and the old one was SATA2. But recently two things were bugging the heck out of me – using either Chrome or Firefox was painfully slow. It wasn’t worth…

-

Exploring btrfs for backups Part 6: Backup Drives and changing RAID levels VM

Hard drives are relatively cheap, especially nowadays. But I still want to stay within my budget as I set up my backups and system redundancies. So, ideally, for my backup RAID I’d take advantage of btrfs’ ability to change RAID types on the fly and start off with one drive. Then I’d add another and go…

-

Exploring btrfs for backups Part 5: RAID1 on the Main Disks in the VM

So, back when I started this project, I laid out that one of the reasons I wanted to use btrfs on my home directory (don’t think it’s ready for / just yet) is that with RAID1, btrfs is self-healing. Obviously, magic can’t be done, but a checksum is stored as part of the data’s metadata…

-

Exploring btrfs for backups Part 4: Weekly Culls and Unit Testing

Back in August I finally had some time to do some things I’d been wanting to do with my Snap-in-Time btrfs program for a while now. First of all, I finally added the weekly code. So now my snapshots are cleaned up every three days and then every other week. Next on the docket is…